Key Takeaways:

- Agentic AI is moving from pilot to production with 67% of institutional traders prioritizing AI/ML capabilities

- Explainable AI is now a regulatory requirement per FINRA’s 2026 Report, not an optional feature

- Bias mitigation frameworks are essential for AI credit scoring compliance in regulated lending

- The 30/90/365-day implementation roadmap helps CFOs move from experiment to integration

- Early deployments of quantum-enhanced AI report 25-40% better fraud detection accuracy alongside fewer false positives

AI in Finance Trends 2026: The CFO’s Guide to Responsible, Scalable Implementation

The AI in finance trends for 2026 mark a fundamental shift from experimental pilots to core operational infrastructure. Finance leaders who mastered AI integration in 2025 are now seeing measurable returns, while organizations still in evaluation mode face widening competitive gaps. Survey data shows that 56% of finance leaders now use AI, but only 17% have embedded it in core workflows. That gap creates both risk and opportunity for CFOs making investment decisions this year.

The regulatory landscape has matured significantly. FINRA’s 2026 Annual Regulatory Oversight Report treats generative AI as a supervised technology requiring full governance frameworks. In practice, this means audit trails, human-in-the-loop validation, and documented oversight for any AI that influences customer outcomes or firm decisions. Organizations without these controls face compliance exposure that could outweigh their AI efficiency gains.

This guide examines seven critical trends shaping finance AI adoption in 2026 and beyond. Along the way, it offers implementation checklists, case studies, and success metrics. The goal is to give CFOs, finance VPs, and compliance officers actionable guidance for moving from pilot to production.

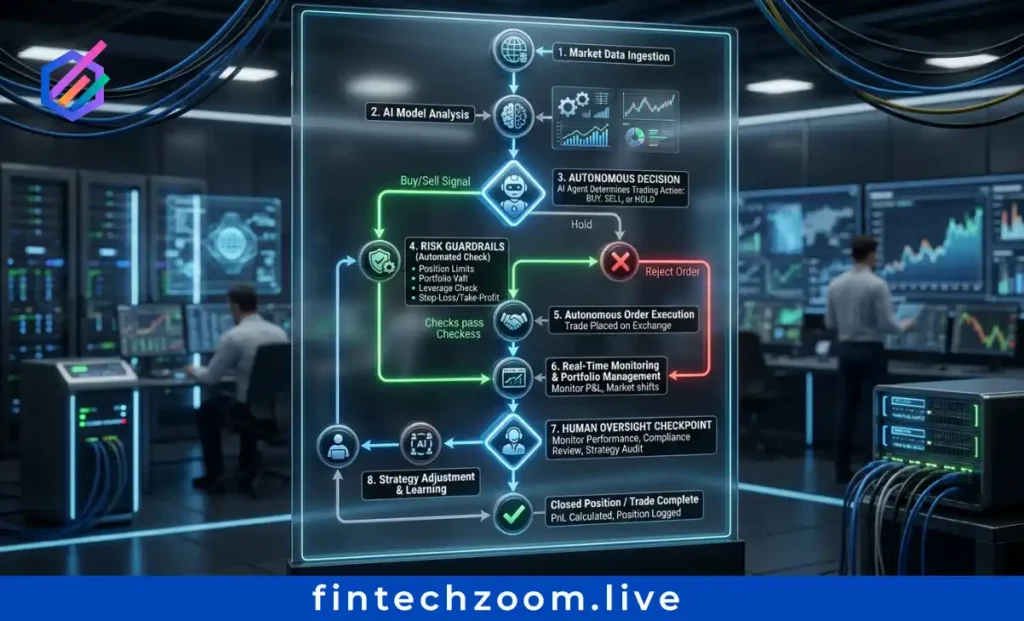

Agentic AI Moves From Concept to Core Trading Infrastructure

Agentic AI refers to autonomous systems that plan, execute, and learn without constant human prompting. Unlike traditional automation that follows predefined rules, agentic AI makes decisions based on real-time market conditions, risk parameters, and strategic objectives. J.P. Morgan’s 2025 e-Trading Edit shows that 67% of traders now prioritize AI/ML capabilities, signaling institutional confidence in autonomous execution systems.

Key Use Cases Gaining Traction:

- Autonomous portfolio rebalancing agents that adjust holdings based on volatility thresholds, correlation shifts, and tax optimization rules without manual intervention

- Real-time risk-adjusted execution algorithms that slice orders across venues while minimizing market impact and tracking error

- Multi-asset class arbitrage agents that identify pricing inefficiencies across equities, fixed income, and derivatives simultaneously
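To make the rebalancing use case concrete, here is a minimal Python sketch of the threshold check such an agent might run before proposing trades. It assumes fixed target weights that sum to 1 and a single drift band (5 percentage points, an arbitrary choice). The `Holding` type and `rebalance_orders` function are hypothetical, and a production agent would also weigh volatility, correlation shifts, tax lots, and transaction costs.

```python
from dataclasses import dataclass


@dataclass
class Holding:
    symbol: str
    market_value: float   # current market value in portfolio currency
    target_weight: float  # desired fraction of total portfolio value (weights sum to 1)


def rebalance_orders(holdings: list[Holding], drift_band: float = 0.05) -> dict[str, float]:
    """Return trade amounts (positive = buy, negative = sell) that restore target
    weights, but only when at least one holding has drifted outside the band."""
    total = sum(h.market_value for h in holdings)
    if total <= 0:
        return {}
    drifts = {h.symbol: h.market_value / total - h.target_weight for h in holdings}
    # Stay idle while every holding sits inside its tolerance band.
    if all(abs(d) <= drift_band for d in drifts.values()):
        return {}
    # Otherwise trade each position back to its target amount.
    return {h.symbol: round(h.target_weight * total - h.market_value, 2) for h in holdings}


portfolio = [
    Holding("EQUITY_FUND", 70_000, 0.60),
    Holding("BOND_FUND", 30_000, 0.40),
]
print(rebalance_orders(portfolio))
```

With a 70/30 portfolio against a 60/40 target, equity has drifted 10 points, so the sketch proposes selling 10,000 of equity and buying 10,000 of bonds. Keeping the trigger separate from the sizing logic makes each piece easier to audit.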

Implementation Checklist for Agentic AI:

- Define clear guardrails for autonomous decision-making authority

- Install kill switches with human override capabilities at multiple levels

- Establish human oversight protocols with defined escalation triggers

- Create audit trails capturing all agent decisions and rationale

- Test agents in sandbox environments before production deployment
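The checklist items above can be wired together in a thin wrapper around an agent's order flow. The sketch below is illustrative only: the `Guardrails` limits, the `GuardedAgent` class, and the order fields are hypothetical names, not a vendor API. It shows a kill switch, a hard notional limit, an escalation tier that routes larger orders to a human, and an append-only audit log that records every decision with its reason.

```python
import json
import time
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Guardrails:
    max_order_notional: float    # hard limit: larger orders are rejected outright
    escalation_notional: float   # orders above this wait for human sign-off
    allowed_symbols: frozenset[str]


@dataclass
class GuardedAgent:
    rails: Guardrails
    kill_switch_engaged: bool = False
    audit_log: list[str] = field(default_factory=list)

    def _record(self, order: dict, decision: str, reason: str) -> None:
        # Append-only JSON lines capturing what the agent proposed, the outcome, and why.
        self.audit_log.append(json.dumps(
            {"ts": time.time(), "order": order, "decision": decision, "reason": reason},
            sort_keys=True))

    def submit(self, order: dict) -> str:
        notional = order["quantity"] * order["price"]
        if self.kill_switch_engaged:
            decision, reason = "blocked", "kill switch engaged"
        elif order["symbol"] not in self.rails.allowed_symbols:
            decision, reason = "blocked", "symbol outside mandate"
        elif notional > self.rails.max_order_notional:
            decision, reason = "blocked", "exceeds hard notional limit"
        elif notional > self.rails.escalation_notional:
            decision, reason = "escalated", "awaiting human approval"
        else:
            # A real system would now route the order to execution.
            decision, reason = "approved", "within guardrails"
        self._record(order, decision, reason)
        return decision


agent = GuardedAgent(Guardrails(1_000_000, 250_000, frozenset({"AAPL", "MSFT"})))
print(agent.submit({"symbol": "AAPL", "quantity": 100, "price": 190.0, "rationale": "drift rebalance"}))    # approved
print(agent.submit({"symbol": "MSFT", "quantity": 1_000, "price": 400.0, "rationale": "drift rebalance"}))  # escalated
agent.kill_switch_engaged = True
print(agent.submit({"symbol": "AAPL", "quantity": 10, "price": 190.0, "rationale": "drift rebalance"}))     # blocked
```

Every path through `submit` writes to the log, including blocked orders, which is what makes the trail useful to supervisors reconstructing an agent's behavior.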

Organizations deploying agentic AI report 30-45% reduction in execution latency and 15-25% improvement in trade quality metrics. However, these gains require robust governance frameworks to prevent unintended market behavior or compliance breaches.

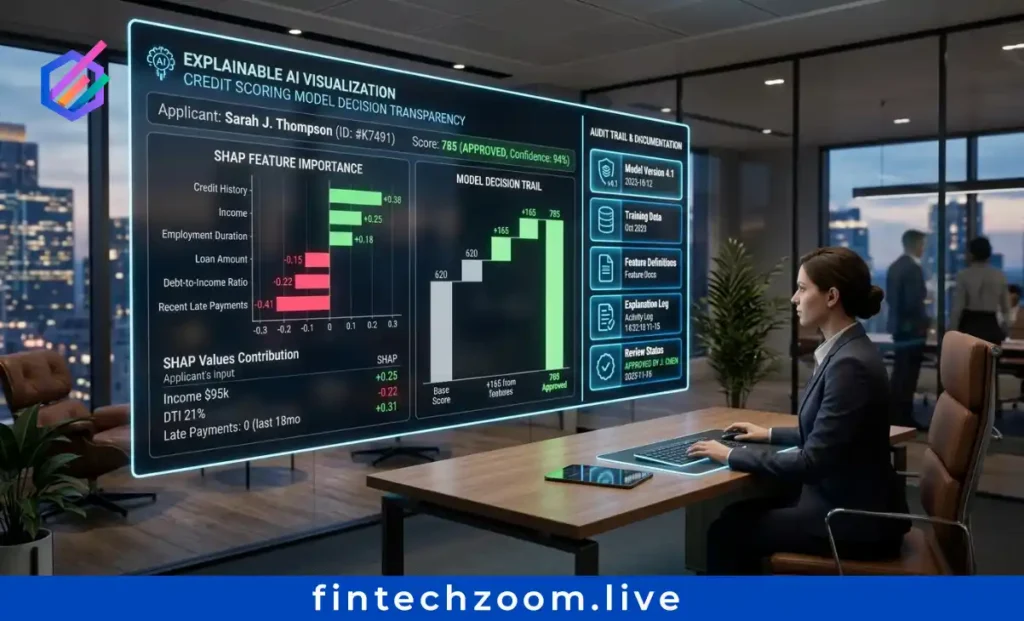

Explainable AI (XAI) Becomes a Regulatory Requirement, Not a Nice-to-Have

FINRA’s 2026 Annual Regulatory Oversight Report explicitly classifies AI as a supervised technology requiring full governance. This designation means financial institutions must maintain complete audit trails for AI-driven decisions affecting customers or firm operations. Black-box models no longer satisfy regulatory expectations for transparency and accountability.

What “Explainable” Means in Practice:

- Prompt and output logging for all generative AI interactions with customer data or investment recommendations

- Model decision transparency satisfying Reg BI and fiduciary duty requirements for investment advice

- Human-in-the-loop validation documentation showing oversight of AI-generated outputs before customer delivery
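A minimal sketch of prompt and output logging with a human-in-the-loop release gate might look like the following. The record fields, the in-memory `store`, the `example-model` name, and the rule that a reviewer must differ from the requester are all assumptions for illustration; a real deployment would write to tamper-evident storage and integrate with existing supervisory systems.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class GenAIInteraction:
    interaction_id: str
    requester_id: str
    prompt: str
    output: str
    model_name: str
    created_at: str
    reviewer_id: Optional[str] = None
    approved: bool = False

    def fingerprint(self) -> str:
        # SHA-256 over prompt and output so later edits to the stored text are detectable.
        return hashlib.sha256(f"{self.prompt}\x00{self.output}".encode()).hexdigest()


def log_interaction(store: dict[str, GenAIInteraction], interaction_id: str, requester_id: str,
                    prompt: str, output: str, model_name: str) -> GenAIInteraction:
    # Every prompt/output pair is recorded before anyone can use the output.
    record = GenAIInteraction(interaction_id, requester_id, prompt, output, model_name,
                              datetime.now(timezone.utc).isoformat())
    store[interaction_id] = record
    return record


def approve(store: dict[str, GenAIInteraction], interaction_id: str, reviewer_id: str) -> None:
    record = store[interaction_id]
    # Assumed separation-of-duties rule: nobody approves their own request.
    if reviewer_id == record.requester_id:
        raise PermissionError("reviewer must differ from requester")
    record.reviewer_id = reviewer_id
    record.approved = True


def release_to_customer(store: dict[str, GenAIInteraction], interaction_id: str) -> str:
    record = store[interaction_id]
    if not record.approved:
        raise PermissionError("output has not passed human review")
    return record.output


store: dict[str, GenAIInteraction] = {}
log_interaction(store, "int-001", "advisor-7", "Summarize the client's Q3 statement.",
                "Draft summary text.", "example-model")
approve(store, "int-001", "supervisor-2")
print(release_to_customer(store, "int-001"))
```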

Technical Approaches for XAI Compliance:

Several methods enable explainability in financial AI models. LIME (Local Interpretable Model-agnostic Explanations) approximates complex model behavior with interpretable surrogate models. SHAP (SHapley Additive exPlanations) quantifies feature contributions to individual predictions. Counterfactual explanations show how input changes would alter model outputs, helping compliance teams understand decision boundaries.
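To show what SHAP-style attributions and counterfactuals actually compute, the sketch below works on a toy, hypothetical credit score with features `income`, `history`, and `dti` (debt-to-income), all scaled to [0, 1]. It calculates exact Shapley values by enumerating every coalition of features, filling absent features from a single baseline applicant (here an assumed portfolio average). The `shap` library instead uses approximations such as KernelSHAP or TreeSHAP against a background dataset, so treat this as a teaching sketch that only scales to a handful of features. The counterfactual search is likewise simplified to stepping one feature up a fixed grid.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Optional

Model = Callable[[dict], float]


def credit_score(a: dict) -> float:
    # Hypothetical scoring rule with an income x history interaction term.
    return 0.5 * a["income"] + 0.3 * a["history"] - 0.4 * a["dti"] + 0.2 * a["income"] * a["history"]


def shapley_values(model: Model, x: dict, baseline: dict) -> dict:
    """Exact Shapley values; features outside a coalition take their baseline value."""
    features = list(x)
    n = len(features)

    def value(coalition: set) -> float:
        point = {f: (x[f] if f in coalition else baseline[f]) for f in features}
        return model(point)

    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions S of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


def counterfactual(model: Model, x: dict, feature: str, threshold: float,
                   step: float, max_steps: int = 100) -> Optional[float]:
    """First value of one feature, stepping up from its current value on a fixed grid,
    at which the score reaches the threshold (None if never reached)."""
    candidate = dict(x)
    for _ in range(max_steps):
        if model(candidate) >= threshold:
            return candidate[feature]
        candidate[feature] += step
    return None


applicant = {"income": 0.4, "history": 0.5, "dti": 0.6}
portfolio_average = {"income": 0.5, "history": 0.5, "dti": 0.5}
print({k: round(v, 4) for k, v in shapley_values(credit_score, applicant, portfolio_average).items()})
cf = counterfactual(credit_score, applicant, "income", threshold=0.25, step=0.05)
print(None if cf is None else round(cf, 2))
```

Because the applicant's history matches the baseline, its attribution is zero, and the income and DTI attributions sum exactly to the gap between the applicant's score and the baseline score. That additivity is what makes Shapley-based reports straightforward to reconcile during an audit.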

Case Study: Mid-Sized Bank XAI Implementation

A regional bank implemented XAI for credit decisions to satisfy FCA requirements. The institution deployed SHAP values for all loan approval models, creating explainability reports for each decision. This approach reduced regulatory inquiry response time from 14 days to 48 hours while maintaining predictive accuracy. The bank now uses explainability reports as customer communication tools, improving transparency and trust.

Organizations investing in XAI infrastructure report 40% faster regulatory audit completion and a 60% reduction in model validation cycle time. The upfront investment pays off in compliance efficiency and stakeholder confidence.

Bias Mitigation Frameworks for AI Credit Scoring Go Mainstream

Historical lending data reflects disparities that AI models can amplify without intentional mitigation, and regulators are watching closely. The Consumer Financial Protection Bureau has issued guidance making clear that fair lending and adverse action notice requirements apply fully to AI-driven credit decisions. Organizations using AI for underwriting must demonstrate proactive bias detection and remediation.

New Scalable Frameworks for Bias Detection:

Recent frameworks enable scalable bias detection and mitigation in AI credit models while maintaining predictive accuracy. These approaches address three critical phases: pre-training data auditing, model optimization with fairness constraints, and post-deployment monitoring for disparate impact.

Practical Steps for Bias Mitigation:

- Pre-training data auditing protocols that identify protected class correlations and historical bias patterns before model development

- Fairness constraints in model optimization that penalize disparate impact during training while keeping any accuracy loss small

- Post-deployment monitoring for disparate impact with automated alerts when approval rates diverge across demographic segments
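Post-deployment monitoring can start from something as simple as comparing approval rates across groups. The sketch below flags any group whose approval rate falls below 80% of a reference group's rate. That four-fifths threshold comes from US employment-selection guidance and is used here only as an assumed screening trigger, not a lending standard; the group labels and counts are hypothetical, and the sketch assumes group membership is available for monitoring purposes.

```python
from collections import defaultdict


def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Approval rate per group from (group_label, approved) pairs."""
    counts: dict[str, list[int]] = defaultdict(lambda: [0, 0])  # group -> [approved, total]
    for group, approved in decisions:
        counts[group][0] += int(approved)
        counts[group][1] += 1
    return {g: approved / total for g, (approved, total) in counts.items()}


def disparate_impact_alerts(decisions: list[tuple[str, bool]], reference_group: str,
                            threshold: float = 0.8) -> dict[str, float]:
    """Return {group: ratio} for groups whose approval rate divided by the
    reference group's rate falls below the threshold."""
    rates = approval_rates(decisions)
    reference_rate = rates[reference_group]
    if reference_rate == 0:
        return {}  # ratio undefined; route to manual review instead
    return {
        g: round(rate / reference_rate, 3)
        for g, rate in rates.items()
        if g != reference_group and rate / reference_rate < threshold
    }


decisions = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 56 + [("B", False)] * 44
    + [("C", True)] * 70 + [("C", False)] * 30
)
print(disparate_impact_alerts(decisions, reference_group="A"))
```

Group B's approval rate is 70% of group A's, so it triggers an alert; group C, at 87.5%, does not. A flag like this is a prompt for investigation, not a finding of discrimination.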

Tools Spotlight: Open-Source vs. Enterprise Platforms

Open-source bias detection libraries like AIF360 and Fairlearn provide accessible starting points for smaller institutions. Enterprise platforms from IBM, Google, and Microsoft offer integrated governance workflows with regulatory reporting capabilities. The choice depends on institution size, regulatory exposure, and existing technology stack.

ROI Angle: Reduced Regulatory Risk Plus Expanded Addressable Market

Bias mitigation delivers dual value. First, it reduces regulatory enforcement risk and associated penalties. Second, fairer lending expands the addressable market by approving qualified borrowers previously excluded due to biased historical patterns. Institutions report 10-15% portfolio expansion after implementing bias-aware AI credit scoring while maintaining default rates.

Generative AI Governance: From ChatGPT Experiments to Enterprise-Grade Workflows

Current usage data shows ChatGPT leads at 35% adoption among finance teams, but specialized finance AI tools lag significantly. This gap indicates widespread experimentation without structured governance. The problem: 68% of CFOs report uncertainty about where to start with AI adoption, creating inconsistent implementation across departments.

The Governance Gap and Its Risks:

Uncontrolled generative AI usage creates multiple exposure points. Customer data may enter public models without consent. AI-generated content may contain errors or compliance violations. Third-party AI tools may lack adequate security controls. Organizations need formal governance before scaling AI adoption.

Practical Governance Framework:

- Use case pre-approval workflows requiring compliance review before deploying AI in customer-facing or regulated contexts

- Data ingestion policies defining what customer data can train models and what must remain excluded

- Output review protocols for customer-facing content requiring human validation before delivery

- Vendor management for third-party AI tools including security assessments, data handling reviews, and contractual protections
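Two of these controls, use-case pre-approval and data ingestion limits, can be enforced in code before any request leaves the firm. In the sketch below, the register entries, risk ratings, and regular expressions are hypothetical, and pattern matching of this kind catches only obvious identifiers; production systems typically rely on dedicated data loss prevention tooling.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    risk_rating: str             # e.g. "low", "medium", "high"
    compliance_approved: bool
    customer_data_allowed: bool  # may unredacted customer data be sent?


# Hypothetical register; in practice this lives in the firm's governance tooling.
REGISTER = {
    "variance_commentary": UseCase("variance_commentary", "low", True, False),
    "client_letter_drafts": UseCase("client_letter_drafts", "high", False, True),
}

# Deliberately simple patterns for illustration; they will miss many identifiers.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(text: str) -> str:
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def prepare_prompt(use_case_name: str, text: str) -> str:
    """Gate a request before it reaches any external model."""
    use_case = REGISTER.get(use_case_name)
    if use_case is None or not use_case.compliance_approved:
        raise PermissionError(f"use case {use_case_name!r} lacks compliance approval")
    return text if use_case.customer_data_allowed else redact(text)


print(prepare_prompt("variance_commentary",
                     "Customer jane.doe@example.com, SSN 123-45-6789, disputed a charge."))
```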

Tool Comparison: ChatGPT Enterprise vs. Microsoft Copilot vs. Specialized Finance AI Platforms

ChatGPT Enterprise offers data privacy protections and administrative controls suitable for general business use. Microsoft Copilot integrates with Office 365 workflows, reducing friction for document creation and analysis. Specialized finance AI platforms from Bloomberg, Refinitiv, and emerging vendors provide domain-specific capabilities with built-in compliance features. Selection depends on use case complexity and regulatory requirements.

Organizations with formal AI governance report 50% faster deployment cycles and 70% fewer compliance incidents compared to peers without structured oversight. Governance enables scale rather than restricting it.

AI + Blockchain Convergence Creates Auditable, Programmable Finance

AI needs verifiable data sources. Blockchain needs intelligent automation. Their convergence addresses mutual limitations while creating new capabilities for institutional finance. This trend gains traction as regulatory clarity improves for both technologies.

Why Convergence Matters:

AI models require trusted data inputs for reliable outputs. Blockchain provides immutable audit trails verifying data provenance. Smart contracts need conditional logic for complex execution. AI provides adaptive decision-making beyond simple if-then rules. Together they create programmable finance with built-in accountability.
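The audit-trail half of this pairing rests on hash chaining: each record stores a hash of its contents plus the previous record's hash, so changing any upstream input breaks every later link. The minimal sketch below shows only that tamper-evidence property; a blockchain adds replication and consensus across parties, which is not modeled here. Record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(payload: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so the same payload always hashes the same way.
    body = json.dumps(payload, sort_keys=True) + prev_hash
    return hashlib.sha256(body.encode()).hexdigest()


def append(chain: list[dict], payload: dict) -> None:
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"payload": payload, "prev": prev_hash, "hash": _digest(payload, prev_hash)})


def verify(chain: list[dict]) -> bool:
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["payload"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


ledger: list[dict] = []
append(ledger, {"source": "vendor_feed", "instrument": "BOND_X", "price": 101.25})
append(ledger, {"model": "valuation_v2", "instrument": "BOND_X", "fair_value": 100.90})
print(verify(ledger))                  # True
ledger[0]["payload"]["price"] = 99.0   # tamper with an upstream input
print(verify(ledger))                  # False
```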

Use Cases Gaining Traction:

- Tokenized assets with AI-driven valuation models that update prices based on market conditions while maintaining blockchain audit trails

- Smart contracts with AI-triggered execution conditions that respond to complex market signals rather than simple threshold breaches

- Cross-border payments with AI-optimized routing plus blockchain settlement reducing costs while improving transparency

Technical Considerations:

Oracle design determines how off-chain data enters blockchain systems. Poor oracle design creates manipulation vulnerabilities. Privacy-preserving computation enables AI analysis without exposing sensitive data on-chain. Regulatory alignment requires coordination between AI governance frameworks and blockchain compliance requirements.
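One common oracle-hardening pattern is to aggregate several independent feeds, discard reports that deviate too far from the median, and refuse to publish without a quorum. The sketch below assumes a 2% deviation band and a quorum of three, both arbitrary, and uses hypothetical feed names; it also assumes strictly positive prices.

```python
from statistics import median


def aggregate_oracle_prices(reports: dict[str, float], max_deviation: float = 0.02,
                            quorum: int = 3) -> float:
    """Combine independent off-chain price reports into one value to publish on-chain.

    Reports deviating from the median by more than max_deviation (as a fraction)
    are discarded; if fewer than `quorum` reports survive, nothing is published.
    """
    if len(reports) < quorum:
        raise ValueError("not enough oracle reports")
    mid = median(reports.values())
    accepted = [p for p in reports.values() if abs(p - mid) / mid <= max_deviation]
    if len(accepted) < quorum:
        raise ValueError("too few consistent reports; refusing to publish")
    return median(accepted)


print(aggregate_oracle_prices({"feed_a": 100.0, "feed_b": 100.4, "feed_c": 99.8, "feed_d": 130.0}))
```

A single bad feed is filtered out, while two broken or colluding feeds that drag the median away trigger a refusal rather than a wrong on-chain price.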

Early Adopter Advantage:

Institutions building AI-blockchain infrastructure now position themselves for next-generation finance stack ownership. Early movers capture market share in tokenized securities, programmable payments, and automated compliance. Late adopters face integration costs and competitive disadvantages as standards crystallize.

From Month-End Close to Continuous Accounting: AI Enables Real-Time Finance

Finance teams traditionally spend excessive time closing books rather than analyzing results. This pattern limits strategic partnership value. AI changes the equation by enabling continuous processes that eliminate batch processing delays.

How AI Changes the Close Process:

- Continuous reconciliation instead of batch processing, matching transactions in real time rather than waiting for month-end cycles

- Real-time variance analysis with automated root-cause suggestions that flag anomalies immediately, with contextual explanations

- Predictive cash flow forecasting updated hourly rather than quarterly, enabling proactive liquidity management
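At its core, continuous reconciliation is a matching rule applied each time new bank or ledger entries arrive, instead of once at month-end. The sketch below matches on exact amount (held in integer cents to avoid floating-point error) within a date tolerance and returns the exceptions for human review. The two-day tolerance and the `Txn` fields are assumptions; AI-based matchers typically layer fuzzy matching on descriptions and counterparties on top of a deterministic baseline like this.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Txn:
    ref: str
    amount_cents: int  # integer cents avoid floating-point matching errors
    booked: date


def reconcile(bank: list[Txn], ledger: list[Txn], date_tolerance_days: int = 2):
    """Match each bank line to one open ledger entry with the same amount booked
    within the tolerance (closest date wins). Returns (matches, unmatched_bank, unmatched_ledger)."""
    open_ledger = list(ledger)
    matches: list[tuple[str, str]] = []
    unmatched_bank: list[str] = []
    for b in bank:
        candidates = [e for e in open_ledger
                      if e.amount_cents == b.amount_cents
                      and abs((e.booked - b.booked).days) <= date_tolerance_days]
        if candidates:
            best = min(candidates, key=lambda e: abs((e.booked - b.booked).days))
            open_ledger.remove(best)
            matches.append((b.ref, best.ref))
        else:
            unmatched_bank.append(b.ref)  # exception for human review
    return matches, unmatched_bank, [e.ref for e in open_ledger]


bank = [Txn("B1", 120_000, date(2026, 3, 2)), Txn("B2", 8_999, date(2026, 3, 3)),
        Txn("B3", 45_000, date(2026, 3, 3))]
ledger = [Txn("L1", 8_999, date(2026, 3, 2)), Txn("L2", 120_000, date(2026, 3, 1)),
          Txn("L3", 45_500, date(2026, 3, 3))]
print(reconcile(bank, ledger))
```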

Implementation Roadmap:

Start with one workflow that demonstrates clear ROI; spend categorization works well for initial pilots. Measure time saved, error reduction, and decision speed improvements. Document the results and build a business case for expansion. Scale to adjacent workflows only after proving value in the initial deployment.

Case Study: Spendesk’s AI-Powered Continuous Close

Spendesk implemented AI-powered continuous close enabling real-time decision-making for mid-market clients. The platform automates expense categorization, receipt matching, and policy compliance checking. Clients report 60% reduction in close cycle time and 40% improvement in forecast accuracy. Finance teams redirect saved time to strategic analysis and business partnership activities.

Organizations achieving continuous accounting report 50% faster month-end close and 35% improvement in forecast accuracy. The shift from backward-looking reporting to forward-looking insights transforms finance function value.
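Forecast-accuracy claims are only comparable when the metric is stated. One common choice is mean absolute percentage error (MAPE); the sketch below computes it for a hypothetical cash forecast before and after a change, plus the relative improvement. Note that a “35% improvement” can mean a relative reduction in error or a drop in percentage points, which are different numbers.

```python
def mape(actuals: list[float], forecasts: list[float]) -> float:
    """Mean absolute percentage error in percent; periods with zero actuals are skipped."""
    if len(actuals) != len(forecasts):
        raise ValueError("series must have equal length")
    pairs = [(a, f) for a, f in zip(actuals, forecasts) if a != 0]
    if not pairs:
        raise ValueError("no non-zero actuals to score")
    return 100 * sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)


def relative_improvement(old_error: float, new_error: float) -> float:
    """Percentage reduction in error relative to the old error."""
    return 100 * (old_error - new_error) / old_error


actuals = [100, 120, 80, 150]       # hypothetical weekly cash receipts, in thousands
old_forecast = [90, 135, 92, 120]   # e.g. a spreadsheet-driven forecast
new_forecast = [96, 126, 84, 141]   # e.g. a continuously updated model
before, after = mape(actuals, old_forecast), mape(actuals, new_forecast)
print(round(before, 2), round(after, 2), round(relative_improvement(before, after), 1))
```

Here MAPE falls from about 14.4% to 5.0%: a relative improvement of roughly 65%, but only about 9.4 percentage points in absolute terms.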

Quantum-Enhanced AI for Next-Generation Fraud Detection

Fraudsters deploy sophisticated tools, including deepfakes and synthetic identities, and defenders need stronger pattern recognition to keep pace. Proponents argue that hybrid quantum-AI systems can model complex relationships across very large numbers of variables that are expensive for classical systems to process.

How Hybrid Quantum-AI Systems Work:

Quantum computers encode information in qubits that can exist in superpositions of states, and quantum algorithms use interference to amplify promising answers. In today’s hybrid systems, a quantum processor typically handles a narrow subroutine, such as a quantum kernel or an optimization step, while classical AI handles the rest of the fraud pipeline. The goal is to surface subtle correlations that classical models miss, fast enough to allow real-time intervention.

Results from Early Deployments:

Organizations testing quantum-AI fraud detection report 25-40% improvement in detection accuracy and 60% reduction in false positives. These gains translate directly to reduced fraud losses and improved customer experience from fewer legitimate transaction declines.
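Figures like these are easiest to evaluate on a confusion matrix, because raw accuracy is misleading when fraud is rare. The sketch below uses hypothetical counts for one million transactions, not data from any deployment, to show how recall, precision, and the false positive rate respond when a model catches more fraud while flagging fewer legitimate transactions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Confusion:
    tp: int  # fraud correctly flagged
    fp: int  # legitimate transactions wrongly flagged or declined
    fn: int  # fraud missed
    tn: int  # legitimate transactions correctly approved

    @property
    def accuracy(self) -> float:
        return (self.tp + self.tn) / (self.tp + self.fp + self.fn + self.tn)

    @property
    def recall(self) -> float:  # share of fraud caught
        return self.tp / (self.tp + self.fn)

    @property
    def precision(self) -> float:  # share of alerts that really are fraud
        return self.tp / (self.tp + self.fp)

    @property
    def false_positive_rate(self) -> float:
        return self.fp / (self.fp + self.tn)


def pct_change(old: float, new: float) -> float:
    return 100 * (new - old) / old


# Hypothetical counts for 1,000,000 transactions containing 500 fraud cases.
baseline = Confusion(tp=400, fp=2_000, fn=100, tn=997_500)
candidate = Confusion(tp=450, fp=800, fn=50, tn=998_700)
for name, m in (("baseline", baseline), ("candidate", candidate)):
    print(name, f"accuracy={m.accuracy:.4f} recall={m.recall:.2f} "
                f"precision={m.precision:.2f} fpr={m.false_positive_rate:.5f}")
print("false positives change:", pct_change(baseline.fp, candidate.fp), "%")
```

In this made-up example both models exceed 99.7% accuracy, yet recall rises from 80% to 90% and false positives fall 60%, which is why fraud teams report those metrics rather than accuracy.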

Practical Adoption Paths:

Cloud-based quantum-AI services from IBM, Google, and AWS provide accessible entry points without capital investment in quantum hardware. On-premises infrastructure suits institutions with strict data residency requirements or transaction volumes high enough to justify dedicated systems. Most organizations start with cloud services before evaluating on-premises options.

Risk Consideration: Dual-Use Implications

Quantum computing threatens current encryption standards while enabling advanced fraud detection. Institutions must plan for post-quantum cryptography migration alongside quantum-AI adoption. The timeline for quantum decryption risk remains uncertain, but preparation costs less than reactive remediation after breaches.

The CFO’s 30/90/365-Day AI Implementation Roadmap

First 30 Days: Scope and De-Risk

Identify one high-friction workflow with clear ROI potential. Reconciliations, variance analysis, and spend categorization work well for initial pilots. Audit existing tech stack for embedded AI features before purchasing new tools. Many organizations already own AI capabilities they do not fully utilize. Establish baseline metrics measuring time saved, error reduction, and decision speed before implementation begins.
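Baselines are only useful if they are captured in a form the pilot can be compared against later. A minimal sketch, with hypothetical metric names and numbers, might record the three measures named above and report the percentage change after the pilot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowMetrics:
    hours_per_cycle: float    # analyst hours to complete one cycle
    error_rate: float         # share of items needing correction, 0 to 1
    decision_lag_days: float  # days from period end to decision-ready numbers


def pilot_impact(baseline: WorkflowMetrics, pilot: WorkflowMetrics) -> dict[str, float]:
    """Percentage reduction per metric versus baseline (positive = improvement)."""
    def reduction(before: float, after: float) -> float:
        return round(100 * (before - after) / before, 1) if before else 0.0

    return {
        "time_saved_pct": reduction(baseline.hours_per_cycle, pilot.hours_per_cycle),
        "error_reduction_pct": reduction(baseline.error_rate, pilot.error_rate),
        "decision_speed_gain_pct": reduction(baseline.decision_lag_days, pilot.decision_lag_days),
    }


# Hypothetical numbers for a reconciliation pilot, captured before and after.
before = WorkflowMetrics(hours_per_cycle=40, error_rate=0.05, decision_lag_days=6)
after = WorkflowMetrics(hours_per_cycle=28, error_rate=0.03, decision_lag_days=4)
print(pilot_impact(before, after))
```

Here the pilot saves 30% of analyst hours, cuts corrections by 40%, and shortens decision lag by about a third.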

Next 90 Days: Build Structure and Governance

Launch an automate-upskill-govern plan with clear ownership assignments. Appoint AI champions in finance, IT, and compliance. Draft initial governance policies covering data usage, model validation, and human oversight requirements. Begin upskilling finance team members in prompt engineering and AI literacy. Document all AI use cases with risk ratings and approval status.

6-12 Months: Scale and Embed

Build a governed finance data core, recognizing that AI performance depends on data quality. Redesign roles around AI capabilities, shifting from task execution to judgment and partnership. Standardize proven use cases across teams, moving AI from innovation project to infrastructure component. Measure strategic partnership value through stakeholder satisfaction surveys and business outcome metrics.

Organizations following structured roadmaps report 3x faster time-to-value compared to ad-hoc AI adoption. Planning enables scale while managing risk appropriately.

Critical Success Factors Most Teams Overlook

Data Quality Over Model Complexity

AI amplifies data issues rather than fixing them. Poor input data produces unreliable outputs regardless of model sophistication. Invest in data cleansing, standardization, and governance before deploying advanced AI capabilities. Organizations with strong data foundations achieve better AI results with simpler models.
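Even basic checks catch many of the issues that degrade model output. The sketch below counts missing required fields, duplicate entry IDs, and unknown account codes in a batch of journal lines. Field names and the chart of accounts are hypothetical; a real data core would add checks such as balancing debits against credits and validating posting periods.

```python
from collections import Counter


def data_quality_report(rows: list[dict], required: list[str],
                        valid_accounts: set[str]) -> dict[str, int]:
    """Count basic defects in journal-line records before any model sees them."""
    ids = Counter(r.get("entry_id") for r in rows)
    return {
        "missing_required": sum(1 for r in rows if any(r.get(f) in (None, "") for f in required)),
        "duplicate_ids": sum(c - 1 for c in ids.values() if c > 1),
        "unknown_accounts": sum(1 for r in rows if r.get("account") not in valid_accounts),
    }


rows = [
    {"entry_id": "J1", "account": "4000", "amount": 1500.0},
    {"entry_id": "J2", "account": "6100", "amount": None},
    {"entry_id": "J2", "account": "6100", "amount": 220.0},
    {"entry_id": "J3", "account": "9999", "amount": 75.0},
]
print(data_quality_report(rows, ["entry_id", "account", "amount"], {"4000", "6100", "6200"}))
```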

Change Management Is 80% of the Work

Technology implementation succeeds or fails based on people adoption. Upskilling finance teams on prompt engineering and AI literacy requires sustained investment. Create training programs, certification paths, and communities of practice supporting continuous learning. Measure adoption rates alongside technical metrics.

Start With Augmentation, Not Replacement

AI handles routine tasks while humans focus on judgment and relationships. This approach reduces resistance while demonstrating value quickly. Replacement narratives create anxiety that slows adoption. Augmentation messaging emphasizes capability expansion rather than job elimination.

Measure Beyond Time Saved

Track forecast accuracy, stakeholder satisfaction, and strategic partnership value alongside efficiency metrics. Time savings matter, but business impact determines long-term AI investment justification. Organizations measuring comprehensive value secure ongoing funding for AI initiatives.

The Window for AI Leadership Is Narrowing

The 2026 AI in finance trends show a clear divergence between leaders and laggards. Organizations embedding AI responsibly now will define the next decade of financial services, while those delaying face widening competitive gaps and mounting catch-up costs.

The trends covered in this guide provide a roadmap for responsible, scalable AI adoption. Agentic AI, explainable systems, bias mitigation, governance frameworks, blockchain convergence, continuous accounting, and quantum-enhanced fraud detection represent the frontier of finance technology. CFOs who master these capabilities position their organizations for sustained competitive advantage.

Start with one workflow this quarter. Measure results. Build governance. Scale systematically. The window for AI leadership narrows with each passing quarter. Action today determines market position tomorrow.

FAQ Section

Q: What is the #1 AI trend in finance for 2026?

Agentic AI—autonomous systems that plan and execute financial tasks with minimal human prompting—is moving from pilot to production, with 67% of institutional traders now prioritizing AI/ML capabilities.

Q: Is explainable AI required for finance compliance in 2026?

Yes. FINRA’s 2026 Report treats generative AI as a supervised technology, requiring audit trails, human-in-the-loop validation, and documented oversight for any AI influencing customer outcomes or firm decisions.

Q: How do I start implementing AI in finance without knowing where to begin?

Follow the 30/90/365-day roadmap: pick one high-friction workflow, audit existing tools before buying new ones, and measure impact beyond time saved from day one.

Q: What’s the biggest barrier to AI adoption in finance?

68% of CFOs cite uncertainty about where to start as the primary blocker, followed by security concerns and insufficient training.

Q: Can AI help with bias in credit scoring?

Yes, but only with intentional design. New scalable frameworks enable bias detection and mitigation in AI credit models while maintaining predictive accuracy.

External Authority Links

- CFO Connect: State of AI in Finance 2026 report

- FINRA: 2026 Annual Regulatory Oversight Report

- Keyrus/Deloitte: AI trends in financial services

- Academic sources on bias mitigation frameworks